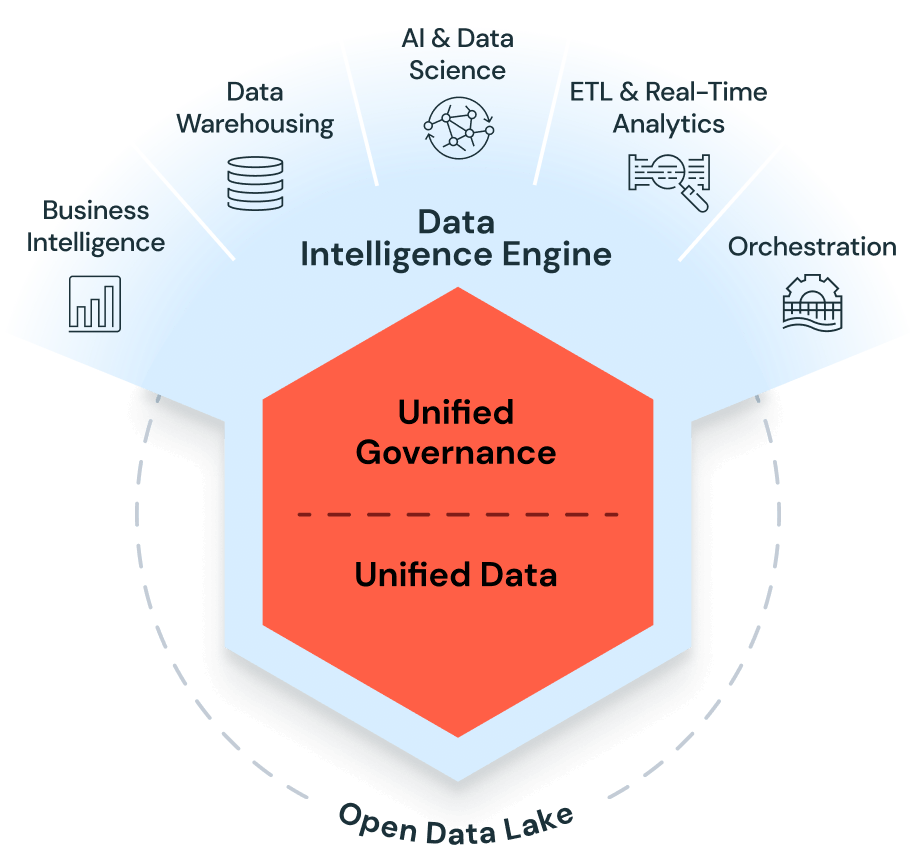

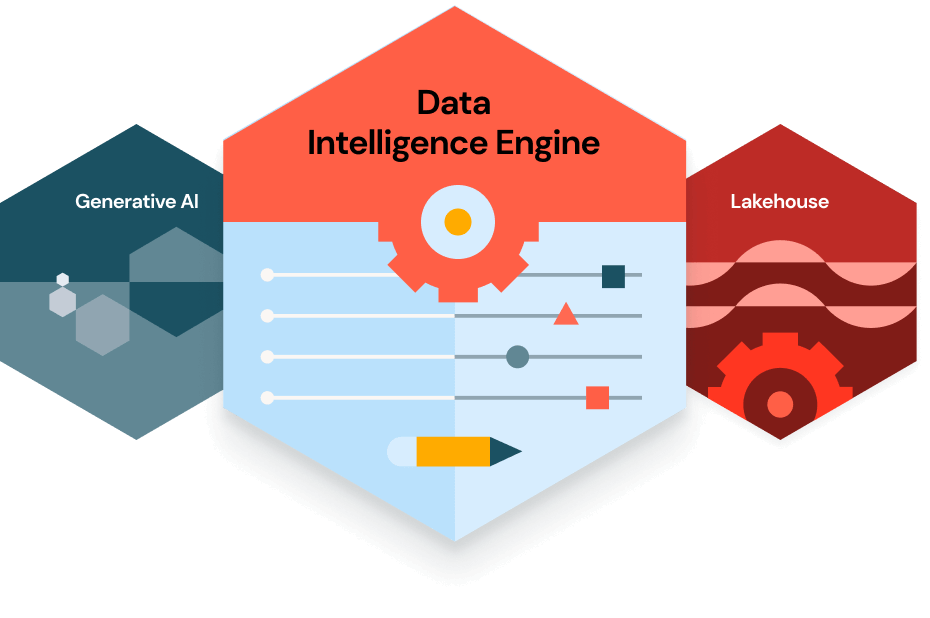

This data service establishes the foundational infrastructure required for high-performance analytics and AI. It involves building robust pipelines that ingest raw data from disparate sources, then transform and aggregate it into clean, reliable datasets. By prioritizing data integrity, scalability, and low latency, it ensures that information is structured and accessible, so that data scientists and AI models can derive actionable insights within a secure, enterprise-grade environment.
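The ingest, transform, and aggregate stages can be illustrated with a small in-memory sketch. Everything here is hypothetical: the two sources (`CRM_CSV`, `WEB_EVENTS`), their column names, and the functions are invented for illustration. A production pipeline would read from real systems and run on a dedicated processing engine.

```python
import csv
import io
from collections import defaultdict

# Hypothetical raw inputs from two disparate sources with different schemas.
CRM_CSV = """customer_id,region,amount
c1,EU,120.50
c2,us,80
c3,EU,not_a_number
"""

WEB_EVENTS = [
    {"user": "c4", "geo": "US", "spend": 45.0},
    {"user": "c5", "geo": None, "spend": 10.0},
]


def ingest_csv(text):
    """Ingest: parse CSV text into a list of dicts keyed by header."""
    return list(csv.DictReader(io.StringIO(text)))


def transform_crm(row):
    """Transform: map a CRM row onto the common schema; return None if invalid."""
    try:
        amount = float(row["amount"])
    except ValueError:
        return None  # reject rows whose amount cannot be parsed
    return {"customer_id": row["customer_id"], "region": row["region"].upper(), "amount": amount}


def transform_web(event):
    """Transform: map a web event onto the common schema; return None if invalid."""
    if not event.get("geo"):
        return None  # reject events with no region
    return {"customer_id": event["user"], "region": event["geo"].upper(), "amount": float(event["spend"])}


def aggregate(records):
    """Aggregate: total amount per region."""
    totals = defaultdict(float)
    for rec in records:
        totals[rec["region"]] += rec["amount"]
    return dict(totals)


def run_pipeline():
    cleaned = [transform_crm(r) for r in ingest_csv(CRM_CSV)]
    cleaned += [transform_web(e) for e in WEB_EVENTS]
    valid = [r for r in cleaned if r is not None]
    return aggregate(valid)


print(run_pipeline())  # {'EU': 120.5, 'US': 125.0}
```

Invalid rows are dropped during transformation so that the aggregate only sees records that conform to the common schema. A real pipeline would typically quarantine these rows rather than discard them silently.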

This service facilitates the secure, governed exchange of datasets between internal departments and with external partners. By using Privacy-Enhancing Technologies (PETs) and cloud-based data exchanges, organizations can unlock collaborative insights and monetize data assets without compromising security or regulatory compliance.
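PETs cover several techniques. Differential privacy is one well-known example, though the text does not say which PETs the service actually uses. The sketch below applies the Laplace mechanism to a counting query before the result is shared with a partner. The function names are hypothetical, and the choice of `epsilon` is a policy decision this sketch does not address.

```python
import random


def laplace_noise(scale, rng):
    # The difference of two i.i.d. exponentials with mean `scale` is Laplace(0, scale).
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records, predicate, epsilon, rng=None):
    """Release a count with epsilon-differential privacy via the Laplace mechanism.

    A counting query has sensitivity 1 (adding or removing one record changes
    it by at most 1), so the noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Usage: share a noisy count of high-value customers instead of the raw records.
customers = [{"id": i, "lifetime_value": i * 10} for i in range(200)]
noisy = private_count(customers, lambda c: c["lifetime_value"] > 1000, epsilon=0.5)
print(round(noisy, 2))  # varies per run; the true count is 99
```

Smaller `epsilon` values add more noise and give stronger privacy. The partner receives only the noisy aggregate, never the underlying rows.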

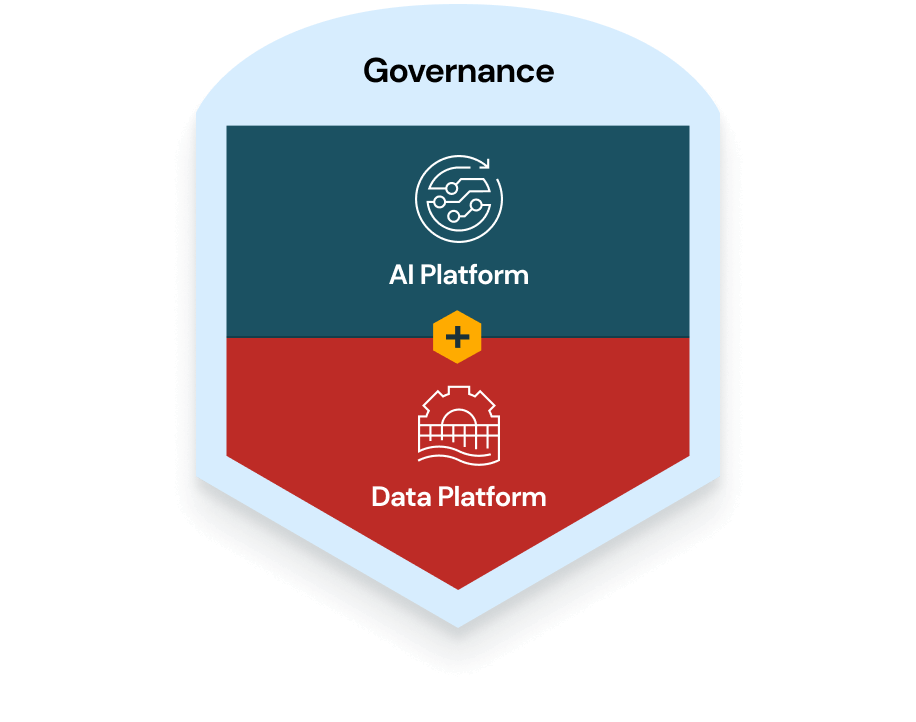

This framework establishes the policies, standards, and accountability required to ensure data quality and compliance. It involves implementing automated metadata management, data lineage tracking, and access controls, transforming data from a raw liability into a trusted, audit-ready corporate asset for strategic decision-making.
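Data lineage tracking answers the question of which upstream datasets a given output depends on. Below is a minimal dataset-level sketch. The `LineageGraph` class and the dataset and job names are hypothetical, and real deployments usually rely on a metadata catalog or an open lineage standard rather than a hand-rolled graph.

```python
class LineageGraph:
    """Minimal dataset-level lineage: each output records its direct inputs."""

    def __init__(self):
        self._parents = {}  # dataset -> set of direct input datasets
        self._jobs = {}     # dataset -> name of the job that produced it

    def record(self, output, inputs, job):
        """Register that `job` produced `output` from `inputs`."""
        self._parents.setdefault(output, set()).update(inputs)
        self._jobs[output] = job

    def upstream(self, dataset):
        """Return every transitive ancestor of `dataset` (iterative DFS)."""
        seen = set()
        stack = list(self._parents.get(dataset, ()))
        while stack:
            node = stack.pop()
            if node not in seen:
                seen.add(node)
                stack.extend(self._parents.get(node, ()))
        return seen

    def produced_by(self, dataset):
        """Return the job that produced `dataset`, or None for source data."""
        return self._jobs.get(dataset)


# Usage with hypothetical datasets and jobs.
g = LineageGraph()
g.record("clean_orders", ["raw_orders"], "dedupe_job")
g.record("revenue_report", ["clean_orders", "fx_rates"], "report_job")
print(sorted(g.upstream("revenue_report")))  # ['clean_orders', 'fx_rates', 'raw_orders']
```

For an audit, this transitive view is what lets a team trace a figure in a report back to its raw sources and the jobs that touched it.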

A data warehouse is a centralized repository designed to aggregate large volumes of structured data from disparate sources. By using OLAP (Online Analytical Processing) architectures, it provides a "single source of truth" for enterprise reporting, enabling fast historical analysis and complex business intelligence queries at scale.
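A core OLAP operation is rolling a measure up along dimensions, producing subtotals at each level in addition to the detailed rows. The sketch below reproduces the semantics of SQL `GROUP BY ROLLUP` in memory. The `SALES` fact rows and the `"ALL"` marker for a rolled-up dimension are hypothetical choices. A warehouse engine would compute this over columnar storage, not Python dicts.

```python
from collections import defaultdict

# Hypothetical fact rows: dimensions (year, region) and a revenue measure.
SALES = [
    {"year": 2023, "region": "EU", "revenue": 100},
    {"year": 2023, "region": "US", "revenue": 50},
    {"year": 2024, "region": "EU", "revenue": 70},
]


def rollup(facts, dims, measure):
    """Sum `measure` at every prefix of `dims`, like SQL GROUP BY ROLLUP.

    Rolled-up dimensions are reported as "ALL".
    """
    result = defaultdict(float)
    for row in facts:
        # Levels run from the most detailed (all dims) down to the grand total.
        for level in range(len(dims), -1, -1):
            key = tuple(row[d] for d in dims[:level]) + ("ALL",) * (len(dims) - level)
            result[key] += row[measure]
    return dict(result)


cube = rollup(SALES, ["year", "region"], "revenue")
print(cube[(2023, "ALL")], cube[("ALL", "ALL")])  # 150.0 220.0
```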

This application focuses on integrating machine learning directly into data architectures. It automates data preparation, utilizes AI-driven anomaly detection to maintain data health, and creates intelligent pipelines that self-optimize, ensuring that the data ecosystem is inherently capable of supporting advanced predictive modeling.
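As a simple stand-in for the anomaly detection described above, the sketch flags unusual values in a pipeline health metric, such as daily row counts, using the modified z-score (median and median absolute deviation) with the commonly cited 3.5 cutoff. This is a robust statistical rule, not a learned model, and the function name and sample data are hypothetical. An AI-driven system would typically learn seasonality and trends rather than rely on a fixed threshold.

```python
import statistics


def flag_anomalies(values, threshold=3.5):
    """Return indices whose modified z-score exceeds `threshold`.

    modified_z = 0.6745 * (x - median) / MAD, where MAD is the median
    absolute deviation; both statistics resist distortion by outliers.
    """
    median = statistics.median(values)
    mad = statistics.median([abs(v - median) for v in values])
    if mad == 0:
        # Degenerate case: most values are identical; flag anything different.
        return [i for i, v in enumerate(values) if v != median]
    return [i for i, v in enumerate(values) if abs(0.6745 * (v - median) / mad) > threshold]


# Hypothetical daily row counts from an ingestion job; day 5 looks like a partial load.
row_counts = [1000, 1020, 990, 1010, 1005, 15, 998]
print(flag_anomalies(row_counts))  # [5]
```

The median and MAD are used instead of the mean and standard deviation because a single bad load would otherwise inflate the spread and hide itself.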

This discipline applies statistical modeling, algorithms, and exploratory analysis to extract actionable business insights. By identifying hidden patterns and correlations within complex datasets, it enables organizations to solve specific business problems, optimize operational efficiency, and develop high-value predictive capabilities.
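A small example of the statistical modeling mentioned above is fitting a least-squares line and measuring correlation between two variables, then using the fit for a simple prediction. The function names and the sample data are hypothetical. Real predictive work would add validation, more features, and uncertainty estimates.

```python
import math


def fit_line(xs, ys):
    """Ordinary least squares for y = intercept + slope * x."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    x_mean, y_mean = sum(xs) / n, sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return y_mean - slope * x_mean, slope


def pearson_r(xs, ys):
    """Pearson correlation coefficient between xs and ys."""
    n = len(xs)
    x_mean, y_mean = sum(xs) / n, sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    syy = sum((y - y_mean) ** 2 for y in ys)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    return sxy / math.sqrt(sxx * syy)


# Hypothetical data: weekly marketing spend (x) vs. orders (y).
spend = [1, 2, 3, 4]
orders = [3, 5, 7, 9]
intercept, slope = fit_line(spend, orders)
print(intercept, slope, pearson_r(spend, orders))  # 1.0 2.0 1.0
print(intercept + slope * 5)  # predicted orders at spend 5: 11.0
```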

This service enables the continuous ingestion and processing of data as it is generated. Using technologies such as Apache Kafka, it allows businesses to react to live events, for example flagging potentially fraudulent transactions or responding to supply chain shifts, with sub-second latency for critical operational intelligence.
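The per-event logic of such a stream processor can be sketched independently of the transport. In the example below, a plain list of `(timestamp, card_id)` tuples stands in for records that would, in production, arrive from a Kafka topic through a client library. The sliding-window rule, the parameter values, and all names are hypothetical and show only the shape of a simple fraud signal.

```python
from collections import defaultdict, deque


def detect_bursts(events, window_seconds=60, max_events=3):
    """Yield (timestamp, card_id) whenever a card exceeds `max_events`
    transactions within the trailing window (ts - window_seconds, ts].

    `events` must be ordered by non-decreasing timestamp.
    """
    recent = defaultdict(deque)  # card_id -> timestamps inside the window
    for ts, card in events:
        window = recent[card]
        window.append(ts)
        # Evict timestamps that have fallen out of the trailing window.
        while window and window[0] <= ts - window_seconds:
            window.popleft()
        if len(window) > max_events:
            yield ts, card


# Hypothetical stream: card A makes four purchases within 30 seconds.
stream = [(0, "A"), (5, "B"), (10, "A"), (20, "A"), (30, "A"), (100, "A"), (100, "B")]
print(list(detect_bursts(stream)))  # [(30, 'A')]
```

Because the detector is a generator that keeps only a bounded window per key, it processes each event as soon as it arrives instead of waiting for a batch, which is the property that makes low-latency reactions possible.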