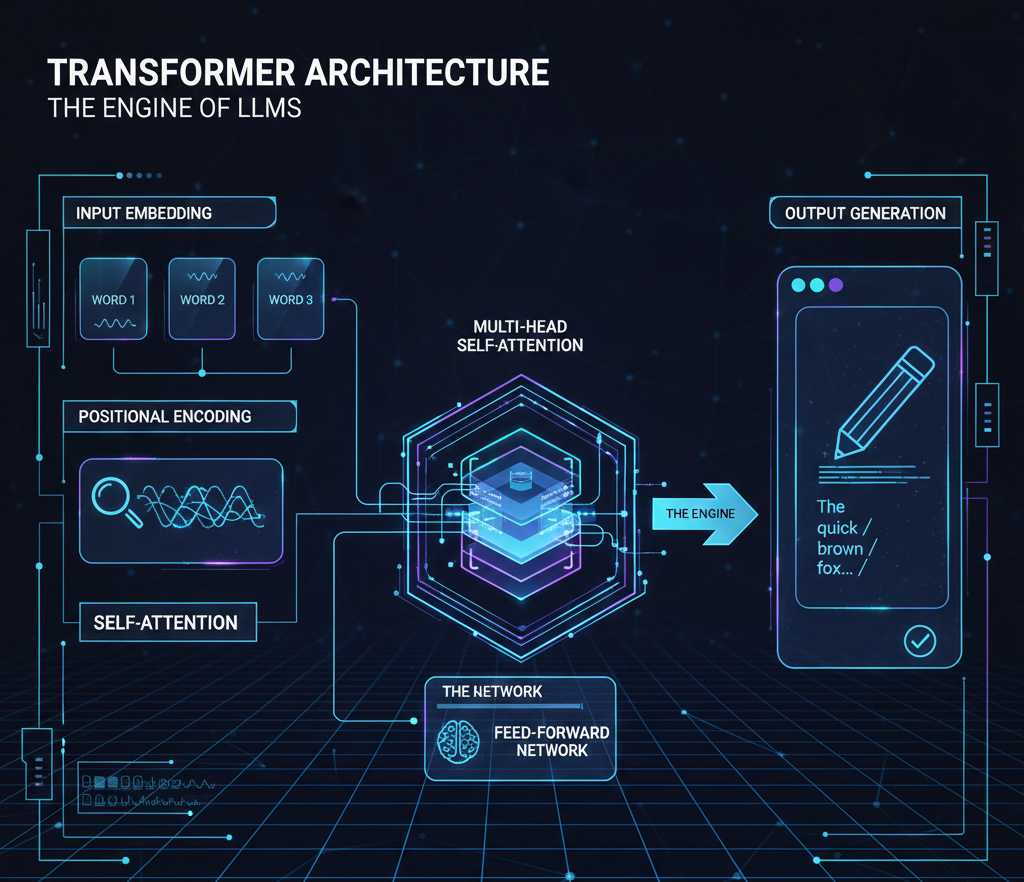

Large Language Models (LLMs) are advanced artificial intelligence systems designed to understand, interpret, and generate human-like text. Built primarily on the Transformer architecture, these models are called "large" because they are trained on massive datasets and contain billions of parameters, which allow them to recognize intricate patterns in language, logic, and even computer code.

During pre-training on diverse, internet-scale text, LLMs learn to predict the next token (a word or word fragment) in a sequence. This simple objective enables them to perform complex tasks such as creative writing, language translation, and multi-step reasoning. Beyond simple chatbots, they serve as the foundational engines for modern generative tools, transforming how humans interact with information. Their ability to track context and nuance makes them a pivotal breakthrough toward more natural and versatile machine intelligence.
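To make the "predict the next word" objective concrete, here is a deliberately tiny sketch. It is an assumption-heavy toy, not how real LLMs work internally: it uses a count-based bigram table over whole words instead of a Transformer over subword tokens, and the corpus and function names (`train_bigram`, `predict_next`, `generate`) are hypothetical. What it does share with an LLM is the generation loop: predict one next item from context, append it, repeat.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Optional


def train_bigram(corpus: List[str]) -> Dict[str, Counter]:
    """Count how often each word follows each other word (a toy stand-in for pre-training)."""
    counts: Dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def predict_next(counts: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the word most often seen after `word`, or None if nothing ever followed it."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def generate(counts: Dict[str, Counter], start: str, max_words: int = 5) -> str:
    """Extend `start` greedily, one predicted word at a time."""
    words = [start.lower()]
    for _ in range(max_words):
        nxt = predict_next(counts, words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return " ".join(words)


corpus = [
    "the cat sat on the mat",
    "the cat ate the fish",
    "a dog sat on the rug",
]
model = train_bigram(corpus)
print(predict_next(model, "the"))           # cat
print(generate(model, "sat", max_words=3))  # sat on the cat
```

A real LLM replaces the lookup table with a neural network that outputs a probability for every token in its vocabulary given the whole preceding context, and it usually samples from that distribution rather than always taking the most likely choice.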

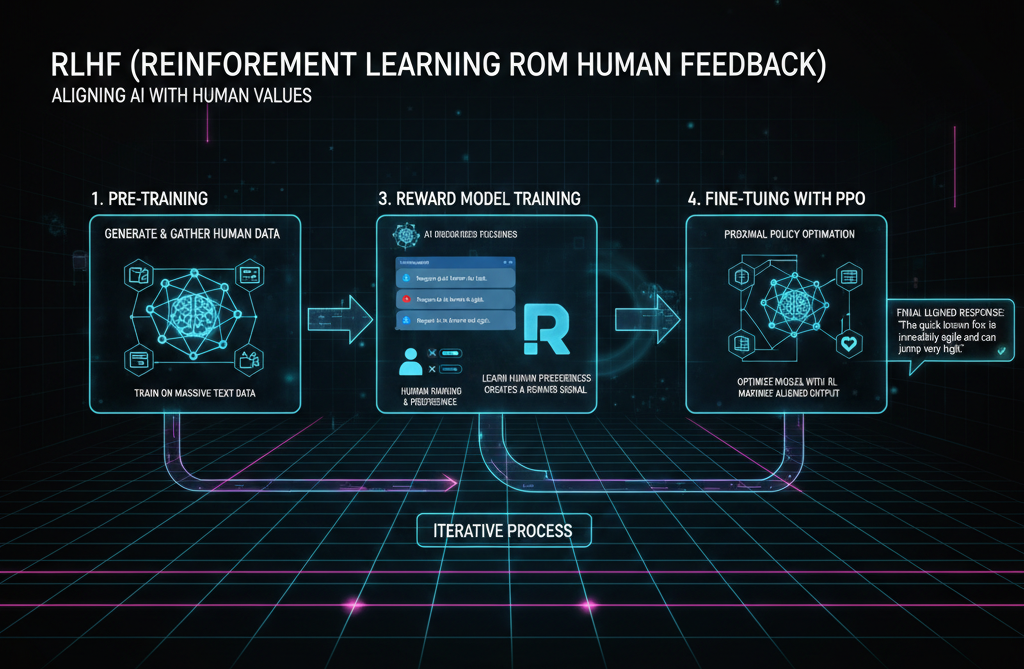

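Reinforcement Learning from Human Feedback (RLHF) is the "alignment" technique that makes LLMs more conversational and safer. Raw pre-trained models often produce repetitive or unhelpful text. In RLHF, human trainers rank several model responses to the same prompt; those rankings are used to train a reward model that scores what a "good" answer looks like, and the LLM is then fine-tuned with reinforcement learning to maximize that score. This process was a key step in turning raw GPT models into helpful assistants such as ChatGPT.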
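The ranking step of RLHF is commonly turned into a reward model with a pairwise (Bradley-Terry style) loss: for each pair, the loss is small when the human-preferred response scores higher than the rejected one. The sketch below is a minimal illustration under stated assumptions: the reward model is a hypothetical linear function over two hand-made features (`answers_the_question`, `is_repetitive`) rather than a neural network, and the later reinforcement-learning stage that fine-tunes the LLM against this reward is not shown.

```python
import math
from typing import List, Sequence, Tuple


def reward(weights: Sequence[float], features: Sequence[float]) -> float:
    """Toy linear reward model: score = weights . features (hypothetical stand-in)."""
    return sum(w * f for w, f in zip(weights, features))


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def pairwise_loss(r_chosen: float, r_rejected: float) -> float:
    """-log(sigmoid(r_chosen - r_rejected)), computed without overflow or log(0)."""
    m = r_chosen - r_rejected
    if m >= 0:
        return math.log1p(math.exp(-m))
    return -m + math.log1p(math.exp(m))


def train_reward_model(
    pairs: List[Tuple[List[float], List[float]]],
    n_features: int,
    lr: float = 0.5,
    epochs: int = 100,
) -> List[float]:
    """Gradient descent on the pairwise loss so preferred responses score higher."""
    weights = [0.0] * n_features
    for _ in range(epochs):
        for chosen, rejected in pairs:
            margin = reward(weights, chosen) - reward(weights, rejected)
            # dLoss/dMargin = -(1 - sigmoid(margin)); dMargin/dw_i = chosen_i - rejected_i
            grad_scale = -(1.0 - sigmoid(margin))
            for i in range(n_features):
                weights[i] -= lr * grad_scale * (chosen[i] - rejected[i])
    return weights


# Hypothetical features per response: [answers_the_question, is_repetitive].
# Each pair is (response the human ranked higher, response ranked lower).
preferences = [
    ([1.0, 0.0], [0.0, 1.0]),
    ([1.0, 0.0], [1.0, 1.0]),
    ([1.0, 1.0], [0.0, 1.0]),
]
w = train_reward_model(preferences, n_features=2)
print(w)  # first weight ends up positive, second negative
```

After training, the model rewards answering the question and penalizes repetition, which is the kind of preference signal the RL stage then pushes the LLM toward.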

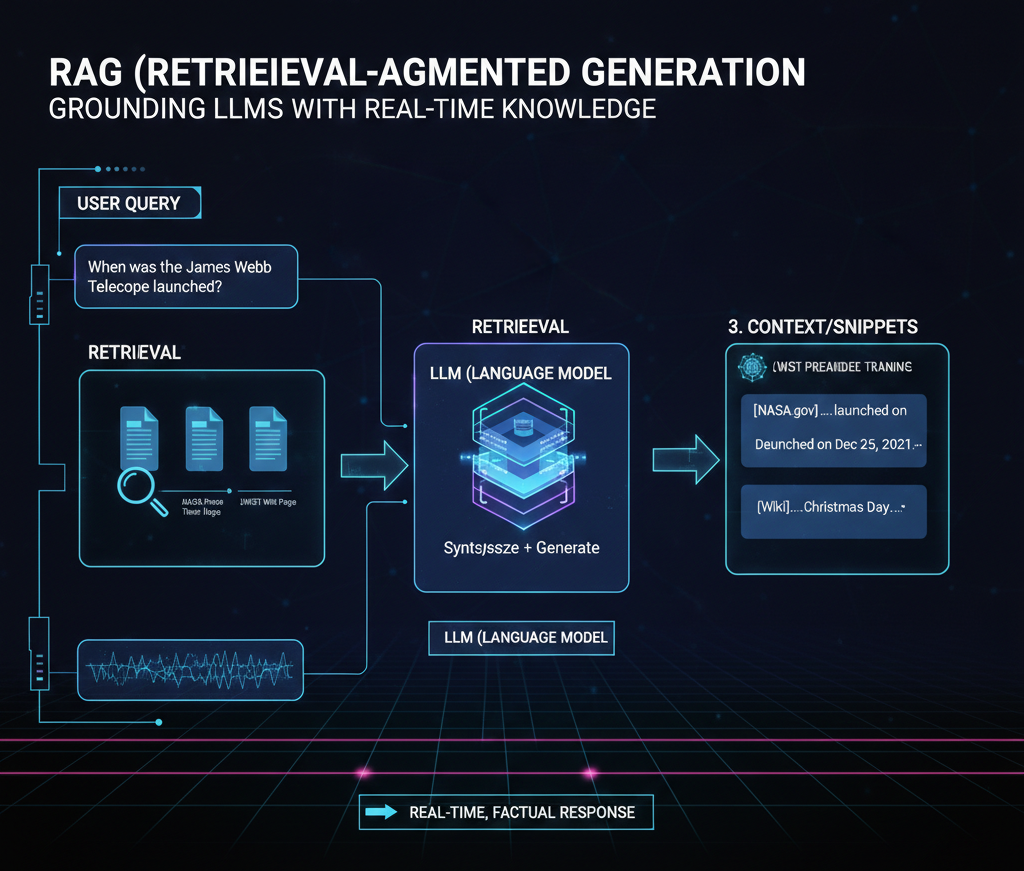

Retrieval-Augmented Generation (RAG) is one of the most important techniques for reducing the "hallucination" problem. Before answering, the system looks up relevant passages in a trusted external source (such as a PDF or a database) and adds them to the model's prompt. Grounding the response in current, verifiable facts makes it less likely that the model simply "guesses" based on its training data.
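The retrieve-then-answer flow can be sketched in a few lines. This is a simplified illustration, not a production design: it assumes a tiny in-memory list of hypothetical documents and ranks them by bag-of-words cosine similarity, whereas real RAG systems typically use embedding models and a vector database. The prompt template in `build_prompt` is also an assumption, and the actual LLM call is left out.

```python
import math
import re
from collections import Counter
from typing import List


def tokenize(text: str) -> Counter:
    """Lowercase word counts; a crude stand-in for an embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors (0.0 if either is empty)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: List[str], k: int = 1) -> List[str]:
    """Return the k documents most similar to the query."""
    q = tokenize(query)
    ranked = sorted(documents, key=lambda d: cosine(q, tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: List[str], k: int = 1) -> str:
    """Put the retrieved passages in front of the question (hypothetical template)."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


docs = [
    "The refund window is 30 days from the date of purchase.",
    "Our office is open Monday to Friday, 9am to 5pm.",
    "Shipping to Canada takes 5 to 7 business days.",
]
print(build_prompt("How many days is the refund window?", docs))
```

The resulting prompt would then be sent to the LLM; because the relevant fact ("30 days") is now in the context, the model can quote it instead of relying on what it memorized during training.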